In our report on the state of competitive machine learning in 2022, we review the 200+ data science competitions that took place in 2022, and we analyse Python packages used by 40 winners in their solutions.

Here we take a closer look at the most popular packages used by winners - what we’ll call the winning toolkit.

The PyData stack

The PyData stack is a collection of Python tools for data analysis, visualisation, machine learning, and more.

Numpy

NumPy is the workhorse package for scientific computing, data science, and machine learning in Python. A highly performant array library implemented in C, NumPy underpins much of the rest of the ecosystem — doing much of the work behind the scenes in Pandas, and being the default array type for other packages like scikit-learn and SciPy. All of the solutions for which we found Python package information used NumPy.

Pandas

Pandas provides a higher-level DataFrame, built on top of NumPy arrays, as well as useful tools for reading and writing data, merging/joining data sets, and various aspects of time series analysis. Almost 87% of winning Python solutions imported it in their code. For more detail, see the section on dataframes.

Scikit-learn

scikit-learn is the go-to library for machine learning in Python. As well as having

implementations of key classification, regression, and clustering algorithms, it has a large variety of useful

utilities for data preprocessing, transformation, building pipelines, cross-validation, and implementing common metrics.

It is imported as sklearn. 82% of winning Python solutions imported it.

Scipy

SciPy is a library that extends NumPy, providing additional tools and fundamental algorithms like optimisation, interpolation, measuring correlations, and sampling from distributions. It was the 7th-most imported package.

Matplotlib

Matplotlib is the default plotting library in the PyData ecosystem, and is used within scikit-learn, as well as other libraries, and works well with Pandas and NumPy. It was the sixth-most imported package in winning solutions, with 63% of winning solutions importing it.

Seaborn

Seaborn is a plotting library built on top of matplotlib, providing a higher-level interface focused on statistical graphics. It requires less configuration and has good default settings which make plots look more attractive without requiring additional styling. Almost 30% of winning solutions imported Seaborn.

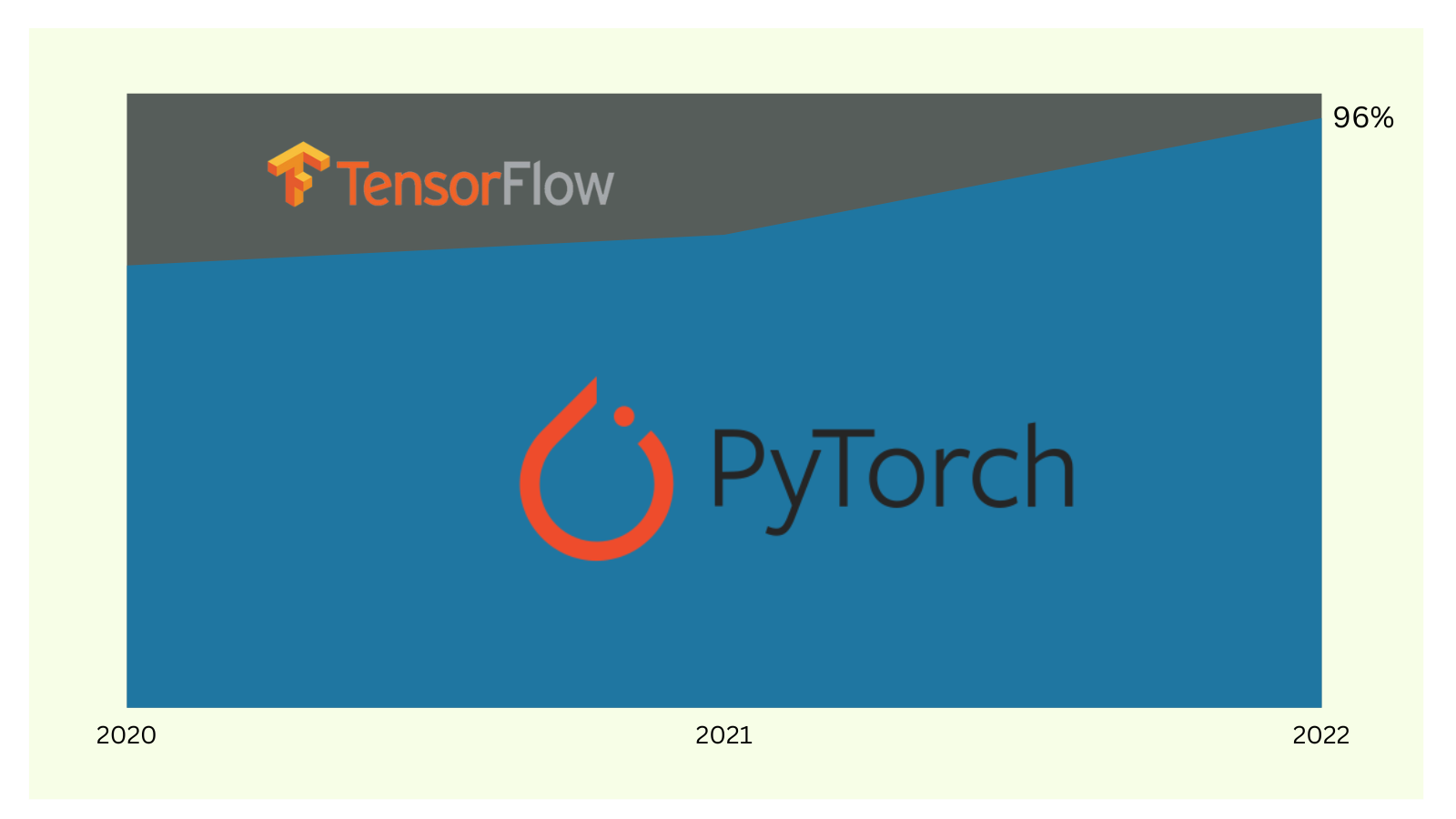

Deep Learning

PyTorch

PyTorch has quickly become the de facto package for deep learning in Python. Having been created by Meta (then Facebook) in 2016, it became part of the Linux Foundation in September 2022. 87% of winning Python solutions imported PyTorch, and 96% of winning solutions which used deep learning used PyTorch. As well as being the clear favourite for NLP and Computer Vision problems, PyTorch models are often used for tabular problems. It’s possible to train most PyTorch models on CPU, but a GPU is practically a must when doing deep learning.

While there are good alternatives (notably TensorFlow), PyTorch is by far the most popular both for competitive machine learning and ML research in general.

Gradient Boosted Decision Trees

Gradient boosted decision trees (GBDTs) are a class of methods for iteratively building strong prediction models by combining many weaker prediction models, specifically decision trees. They have been a staple in competitive machine learning since the introduction of XGBoost in 2014. All of the main GBDT libraries support GPU training and have bindings for multiple languages, including C++, Python, R, as well as sometimes Java, C#, and Julia. They support both classification and regression models. The differing algorithms and implementations between libraries lead to trade-offs between predictive accuracy, training efficiency, and inference efficiency. This article provides a great primer on how they work.

LightGBM

LightGBM, created by Microsoft in 2016, hasn’t been around for as long as XGBoost, but seems to be the current favourite among winners. Almost a quarter of the code solutions we found used LightGBM, and the total number of mentions of LightGBM by winners in their solution write-ups or to our questionnaire is the same as those of CatBoost and XGBoost combined.

CatBoost

CatBoost, developed by Yandex and initially released in 2017, provides additional support for categorical features. It was the second-most used GBDT library in 2022, after LightGBM.

XGBoost

XGBoost, created by Tianqi Chen, is the library that initially popularised GBDTs for Kaggle when it was released in 2014, and is often still seen as the gold standard for tabular data. However, in 2022, it was only the third-most popular GBDT library in winning solutions, after LightGBM and CatBoost.

Experiment Tracking and Hyperparameter Optimisation

Optuna

Optuna is a hyperparameter optimisation library which can be used with any modelling framework - including PyTorch, scikit-learn, XGBoost, LightGBM, and others. It makes it easy to efficiently search for the optimal hyperparameters, and takes care of parallelisation. While Optuna isn’t the only library which can do this, it was very clearly the most popular in 2022 — with around 10% of all winning solutions importing it, and 30% of winners who did any kind of hyperparameter optimisation using it. (61% of winners did manual hyperparameter optimisation, and the remainder used either genetic algorithms or a custom automated hyperparameter tuning approach)

Weights & Biases

Weights & Biases is an MLOps platform which makes it easy for data scientists to track experiments, and track and version data. We found that 13% of winning solutions imported their wandb Python library. It’s worth noting that while W&B does have a free tier for personal use, unlike the other libraries mentioned here their tools are not open source. For an open source alternative, try mlflow.

NLP

Transformers

Transformers are at the core of all NLP modelling these days (see NLP Solutions), and Hugging Face’s aptly named Transformers library makes it easy to create NLP pipelines, download pre-trained models, and fine-tune them. Every single winning NLP solution we found imported Hugging Face’s transformers library.

Computer Vision

Albumentations

Albumentations is a Python library for fast and flexible image augmentations. Image augmentation is a key part of computer vision modelling — often used to generate additional training data by transforming existing training data — for example, flipping an image, or adding noise to it, to make trained models more robust. Albumentations supports more than 60 different image augmentations, and is optimised for performance. Half of all winning computer vision solutions we found imported albumentations.

OpenCV

OpenCV is an open source computer vision library that includes several hundreds of computer vision

algorithms. It is mostly written in C++, with bindings for Python, Java, MATLAB, and Octave. It has a wealth of

functionality, but we found winners mostly using it for reading/writing image data, as well as resizing images and

converting between colour spaces. Just over half of all winning computer vision solutions imported it. It’s often

imported as cv2.

Pillow

Pillow — the Python Imaging Library — provides image processing tools.

Just under half of all winning computer vision solutions imported it, often making use of its Image module for things

like adjusting brightness or equalising images. It’s usually imported as PIL, since it’s a fork of a — now defunct —

library with that original name.

scikit-image

scikit-image is a collection of image processing algorithms. A quarter of winning computer vision solutions imported it.

timm

timm is a library containing over 700 pre-trained image models, as well as various other utilities for machine learning in computer vision. It was imported in just under half of winning computer vision solutions.

One additional libraries which was very popular was tqdm, which provides a command-line progress bar. It was imported in over 80% of winning solutions, making it the fourth-most imported package (after NumPy, Pandas, and PyTorch).

More

For more on what makes winners win, read the rest of our state of competitive machine learning in 2022 report.